Face to Face with GenAI Hype

Author: Damir Kahvedžić, Ph.D.

My wife came to me a couple of weeks ago needing to write a last-minute presentation for her work. She asked, “Can we use CoPilot? I hear it can generate a presentation for me.” “Sure,” I said. “Your company has licensed it on your laptop. Enter a prompt and it will make slides in PowerPoint.” “Perfect!” she said. “Let’s do that.” We ran to the laptop, typed in our prompts and CoPilot dutifully created the presentation and content. It was immediate and impressive. It had the correct length, perfect formatting, a beginning, middle and end, and no typos. It was perfect.

My wife hated it. The content was generic and bland, and not at all what she expected. “Where is the detail? It’s missing statistics!” she said. The reality hit her: CoPilot helped her get started, but she, as the subject matter expert, had to finish it. She ran head-first into Amara’s Law[1].

“WE TEND TO OVERESTIMATE THE EFFECT OF A TECHNOLOGY IN THE SHORT TERM BUT UNDERESTIMATE THE EFFECT IN THE LONG TERM”

You can’t really blame her for her excitement, though. This is where we were a few years ago. We were promised that we could type in our criteria and the LLMs would extract ALL privileged documents for us. It would speed up or even end review, lower costs and upend our workflows. But survey after survey has shown that even though the excitement for AI is palpable and teams want to use it, there are a lot of AI adoption challenges ahead. Costs, Data Governance, Hallucinations! Not to mention that we are still waiting for that killer app. How can we foster adoption? How can we bridge the gap between the expectations of end users, like my wife, and the reality that AI service providers like ProSearch can provide? Where in the adoption process are we?

Gartner Hype Cycle

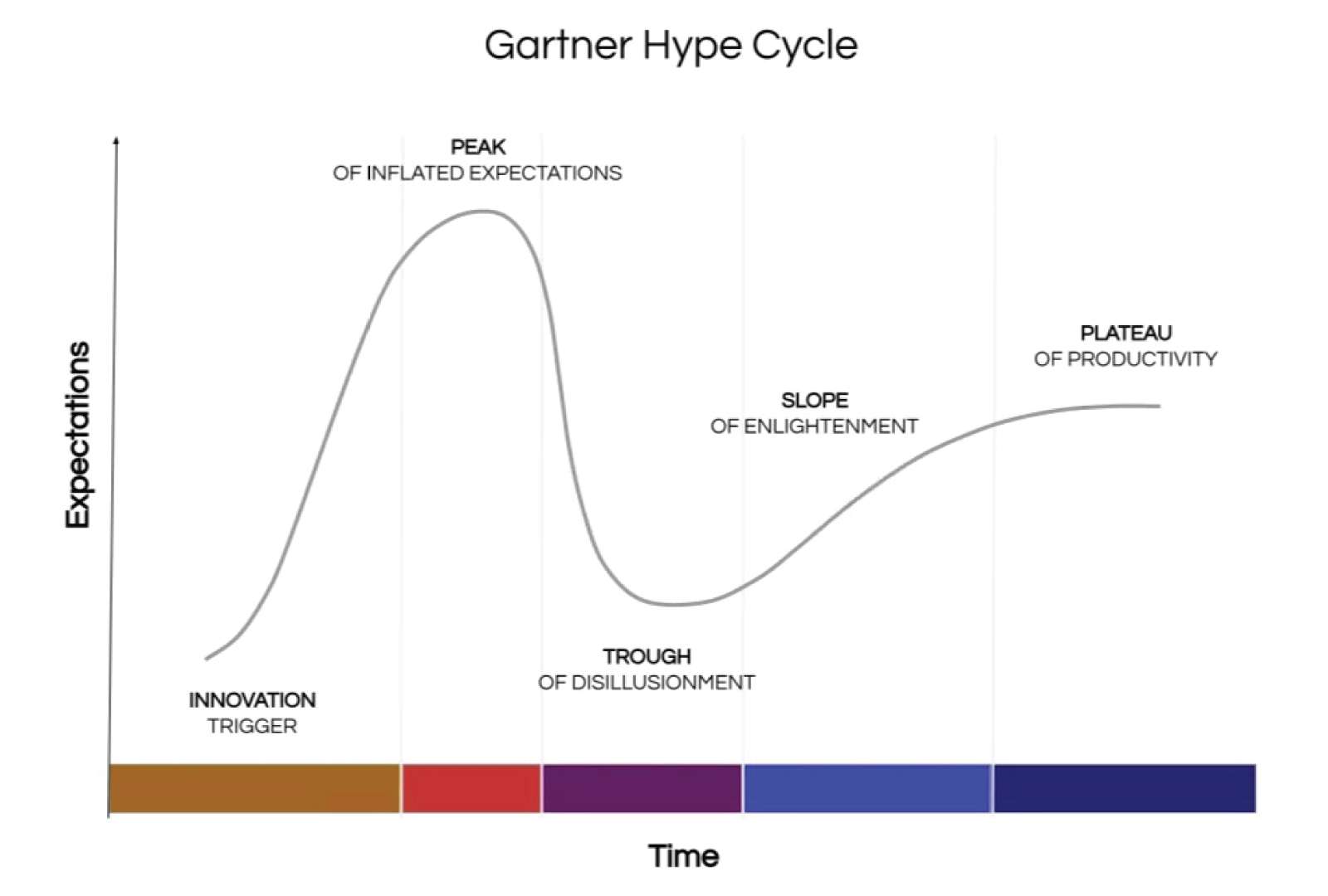

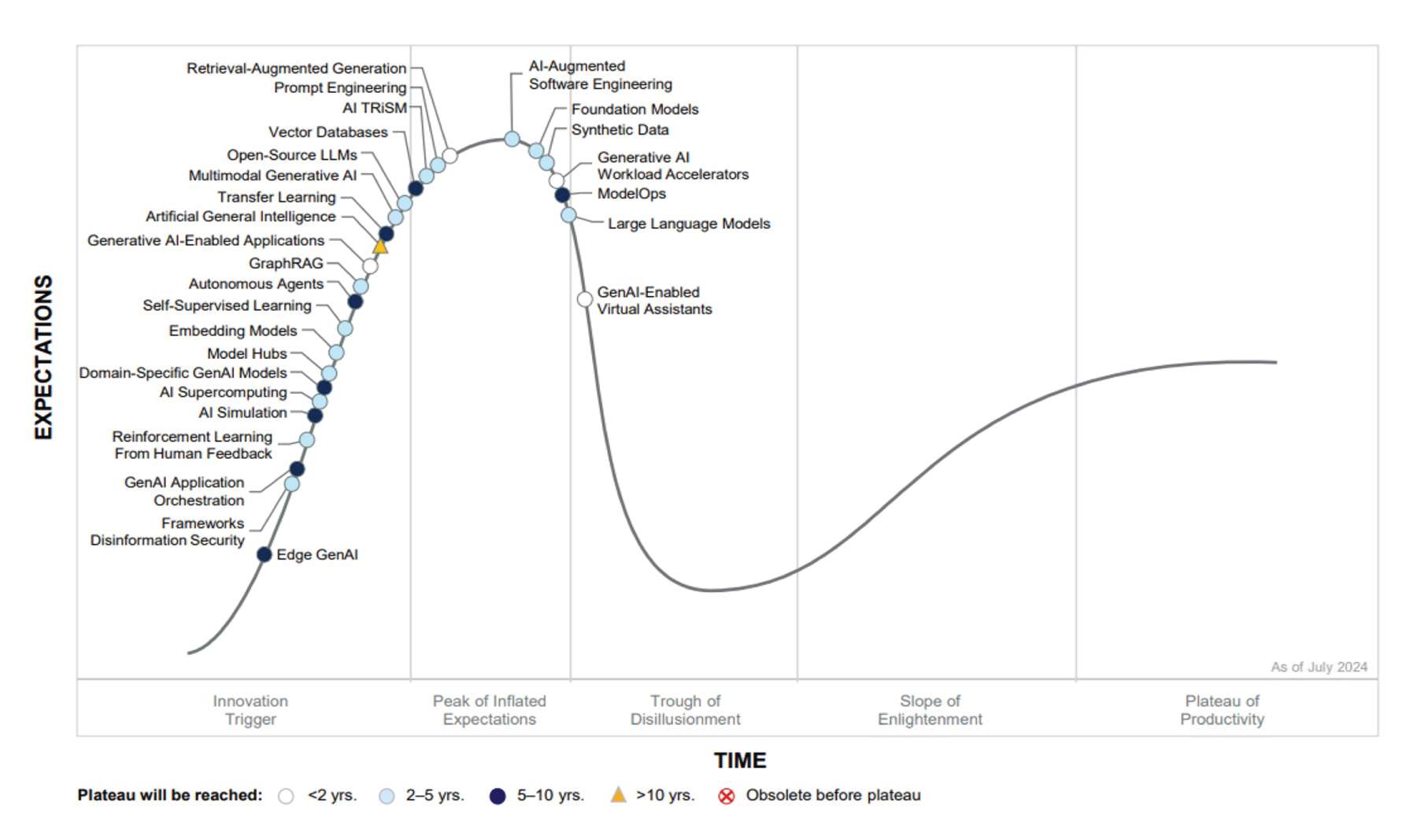

The technology adoption cycle is a well-known process. Gartner calls it the Hype Cycle. It’s divided into distinct phases that most disruptive technologies must travel through as a rite of passage. It starts with some sort of innovation trigger: a new vendor, a news report, an unveiling. Think of Steve Jobs holding up the original iPhone. It is exciting and new, and it promises the world. It’s the whole internet in your hand! Our excitement grows and reaches the Peak of Inflated Expectations.

We get the iPhone. Once it’s in our hands, though, reality sets in and we realise that the technology has drawbacks. It is expensive and has limitations (the original iPhone didn’t have the App Store[2]). And that internet you were promised? The original iPhone could not browse the Flash websites we were all using in the 2000s[3]. Our expectations wane. Gradually, though, these concerns are addressed: costs are lowered, the App Store is released, and we adjust our habits and stop going to the Flash websites. We eventually incorporate the technology into our day-to-day lives and enter the Plateau of Productivity.

Some technologies don’t make it through the phases. Apple’s other device, the Vision Pro[4], similarly garnered lots of excitement and is an engineering marvel but could not be integrated into our daily lives as intended. The technology has slowly withered away.

Where is AI in this graph? Is it successfully traversing the stages or will it be another Vision Pro?

Gartner AI Hype Cycle

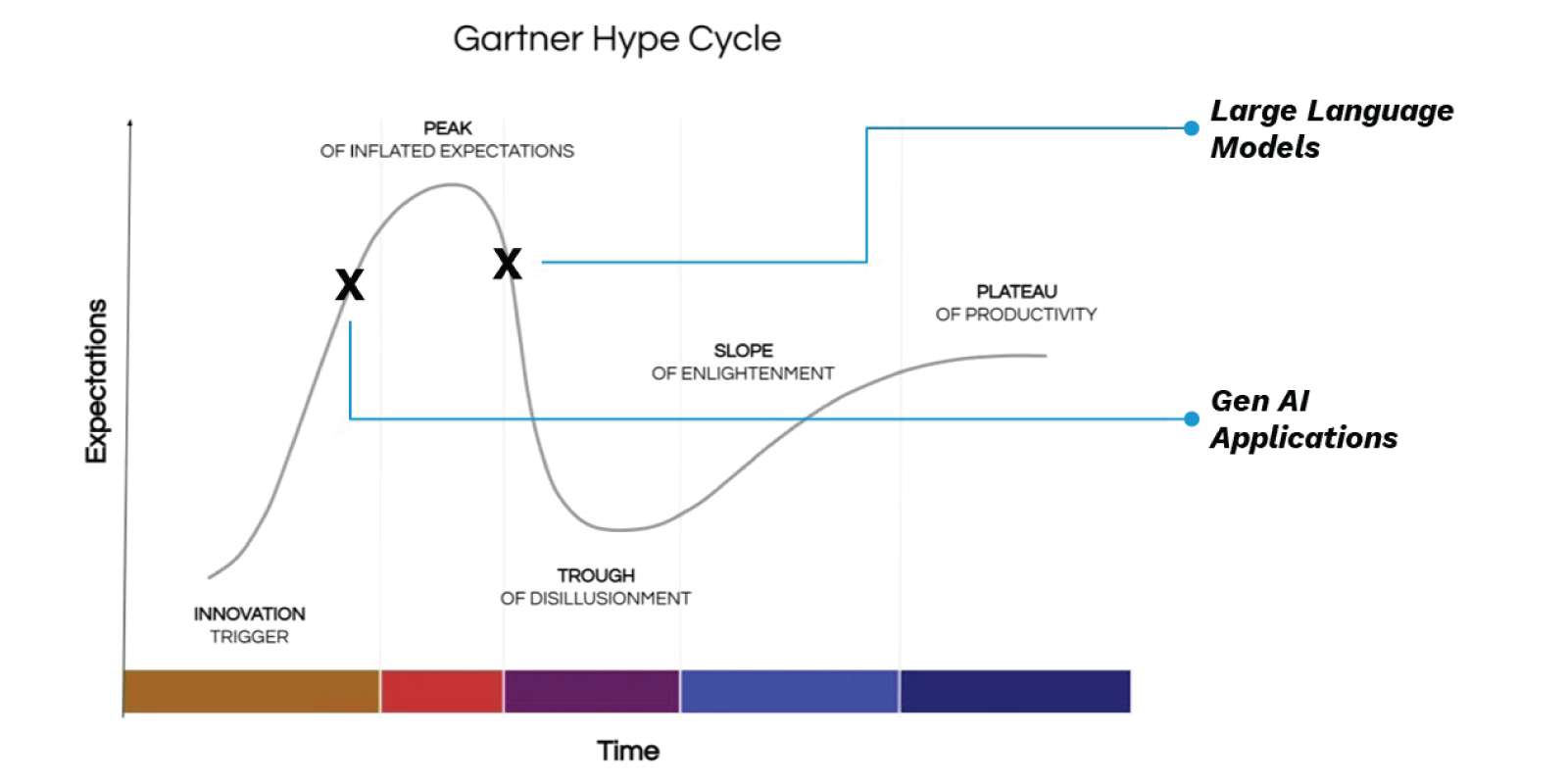

According to Gartner, LLMs are placed past the Peak of Inflated Expectations[5]. They have been available to the public long enough for users to understand their limitations. Hallucinations are the famous example, but there are also issues with cost of ownership and data governance. These roadblocks of reality are dampening the excitement that was generated when LLMs were first unveiled a few years ago.

On the other hand, Gartner places Generative AI Applications in the ascendancy. They are still generating excitement in the field. These applications, such as chatbots, document authoring software and translation software, utilise LLMs in a very narrow way for a specific use case. In so doing, these implementations can focus on specific issues introduced by LLMs and minimise their impact. As a result, there is still an expectation that the software built around an LLM will solve any inherent LLM problems.

However, by definition, Generative AI applications are based on LLMs. In order to know what features Gen AI applications can implement, we need to know the capabilities of the LLMs they are using.

The Growth of LLMs

LLM releases have come thick and fast. An informal survey shows that 24 major Large Language Models were released in 2024. These cover multiple geographies, vendors and use cases. OpenAI by itself released or announced GPT-4o, GPT-4o mini, o1, o3 and o1-pro in 2024. With the deluge of LLM releases, patterns have started to emerge:

- Reasoning LLMs (such as OpenAI’s o1 and o3 models) have become popular. These LLMs mimic human reasoning. Rather than being a black box with a potentially hallucinated answer, they explain how they came to their conclusion and illustrate any logical assumptions they have made. In so doing, they behave in a more trustworthy and transparent way.

- Open Models (the likes of DeepSeek and Llama) allow you to download the weights and run the LLMs yourself, should you want to. They attempt to illustrate how decisions are made and therefore allow users to interrogate their construction.

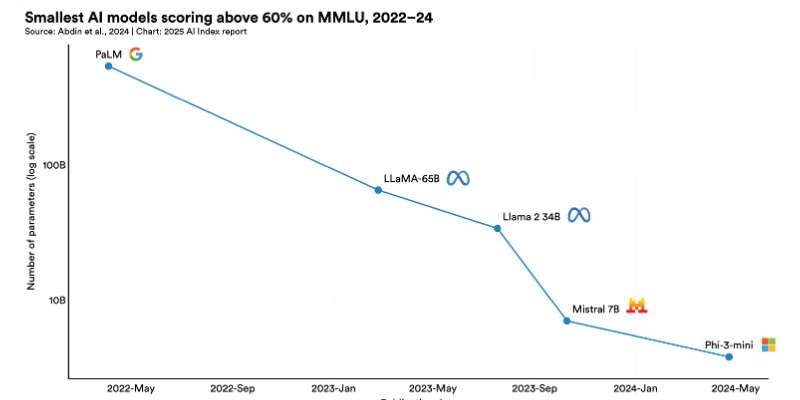

Above all, though, the LLMs are getting smarter and smaller. The ‘mini’ LLMs, that is, those LLMs with mere billions of parameters, are achieving the same results as the full-scale LLMs from a few years ago, such as GPT-3.5[6]. Training is therefore becoming more efficient. As a result, smaller models tend to be trained more quickly, consume less power and, above all, are cheaper than their full-scale alternatives.

The Cost of AI

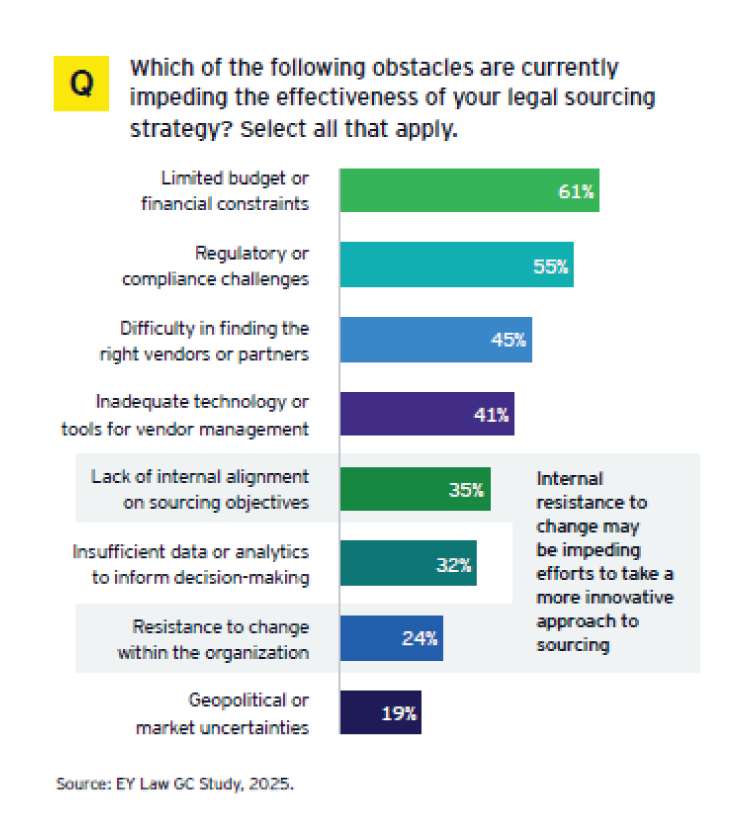

This is the crux of AI adoption in the legal industry. Cost. As much as legal departments want to use AI, it is cost that is proving the biggest roadblock. Recent surveys[7] have shown that most legal departments want to use Generative AI but are hampered by budgetary constraints and cost of ownership. The return on investment for AI is difficult to determine and difficult to justify when compared to cheaper non-AI alternatives.

Traditional processes such as CAL and TAR are well understood and provide results just as good as those of current Gen AI models. Keyword-based searching mechanisms are free and do not incur any per-document cost at all. Until the question of the return on investment for Gen AI software is answered, the adoption of such software will remain low and limited to niche edge matters.
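To make the return-on-investment question concrete, here is a minimal sketch in Python of a break-even comparison between two review approaches. Everything in it is an assumption for illustration: the `ReviewOption` fields, the simple cost model (a fixed cost, a per-document charge and reviewer time) and the example figures are hypothetical placeholders, not numbers from any survey or vendor.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewOption:
    """One way of reviewing a document set. All figures are illustrative."""
    name: str
    fixed_cost: float             # licences, setup, training (hypothetical)
    cost_per_document: float      # per-document processing charge (hypothetical)
    reviewer_hours_per_1k: float  # human review hours per 1,000 documents


def variable_cost_per_document(option: ReviewOption, hourly_rate: float) -> float:
    """Processing charge plus reviewer labour for a single document."""
    return option.cost_per_document + option.reviewer_hours_per_1k * hourly_rate / 1000


def total_cost(option: ReviewOption, documents: int, hourly_rate: float) -> float:
    """Fixed cost plus variable cost for the whole document set."""
    return option.fixed_cost + variable_cost_per_document(option, hourly_rate) * documents


def break_even_documents(baseline: ReviewOption, candidate: ReviewOption,
                         hourly_rate: float) -> Optional[float]:
    """Volume above which the candidate is cheaper than the baseline.

    Returns None when the candidate never catches up, i.e. when it saves
    nothing per document.
    """
    saving = (variable_cost_per_document(baseline, hourly_rate)
              - variable_cost_per_document(candidate, hourly_rate))
    if saving <= 0:
        return None
    extra_fixed = candidate.fixed_cost - baseline.fixed_cost
    return max(extra_fixed / saving, 0.0)


if __name__ == "__main__":
    # Placeholder numbers only; substitute figures from a real matter.
    keyword = ReviewOption("keyword search", 0.0, 0.0, 10.0)
    gen_ai = ReviewOption("Gen AI review", 20_000.0, 0.05, 4.0)
    print(break_even_documents(keyword, gen_ai, hourly_rate=50.0))
```

Under this simplified model, a Gen AI tool only pays for itself once the matter is large enough for the per-document saving to cover the extra fixed cost, which is one way to see why adoption may stay confined to particular matters until costs fall.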

The Future

My wife did end up using the AI-generated presentation. She may have spent a little more time than she wanted adding more information, but it was ultimately a very useful and fruitful experience. She and I learned a lot. Looking at the rest of the Gartner AI Hype Cycle, there are several initiatives and technologies sprouting up on the back of the LLMs aimed at addressing the challenges that my wife faced. We already discussed hallucinations being addressed by reasoning models and the issues of cost addressed by mini models, but there are quite a few technologies worth keeping an eye on for the future.

- Domain-Specific Models: models built on top of the knowledge of a specific field are being developed for just the scenario that my wife encountered. Her topic was biochemistry and European road traffic laws. CoPilot simply did not know any specifics about these fields. An LLM based on the PubMed library or some other scientific journal would have produced much more accurate information.

- Agentic AI: this technology is so new it was not part of the AI Hype Cycle in 2024. Agentic AIs are LLMs that have agency. Not only do they return an answer to a prompt, but they may also generate some test data and run simulations to gather the results.

The Gen AI field is as exciting and vibrant as ever. Though I do not think that it will go the way of the Vision Pro, it does have some challenges to meet before it is fully adopted. This is a totally normal and natural process. These difficult questions must be asked in order for the technology to be fully integrated into our workflows. I have no doubt that in two or three years we will stop talking about AI software and just refer to it as software. We will have moved out of the Trough of Disillusionment and entered the Plateau of Productivity.

[1] What is Amara’s Law and why it’s more important now than ever – The Virtulab

[2] A Look Back at the Original Apple App Store – Apple Gazette

[3] Does the iPhone Support Flash? – EveryiPhone.com

Damir Kahvedžić is a technology expert specializing in providing clients with technical assistance in eDiscovery and Forensics cases. He has a PhD in Cybercrime and Digital Forensics Investigations from the Centre for Cybercrime Investigation in UCD and holds a first-class Honours B.Sc in Computer Science. Experienced in the use of industry leading software, such as Relativity, EnCase, NUIX, Cellebrite, Clearwell, and Brainspace, Damir is also a PRINCE2 and PECB ISO 21500 qualified project manager. Damir has published both academic and technical papers at several international conferences and journals including the European Academy of Law, Digital Forensic Research Workshop (DFRWS), Journal of Digital Forensics and Law amongst others.